The project explores how shared situation models arise and adapt over time in communication about environments. In this context, it examines how these formation and adaptation processes interact with the interlocutors’ visual perception. The question is investigated in both human-human and human-robot scenarios, combining psycholinguistic experiments and computational modelling.

Second Period: July 2010 - June 2014

Humans often communicate about entities in their immediate surroundings using spatial descriptions, more precisely projective spatial relations such as “A is in front of B” or “C is to the left of D”. Although interpreting such spatial expressions seems straightforward to humans, artificial systems such as robot companions suffer from fragile perception of the visual scene and from the inherent uncertainty of spatial descriptions. This project deals with these issues in an integrated fashion, i.e. acquiring a semantic scene interpretation from the robot’s 3D perception, interpreting and generating verbalized spatial referential expressions, and managing the interaction of these tasks so that each of the corresponding representations can be disambiguated and enriched. The selection and processing of appropriate spatial frames of reference (FOR) is a key factor in combining both domains and has been a focus of this project in the current funding phase, both in psycholinguistic experiments and in computational modelling.
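
The inherent uncertainty of projective terms can be illustrated with a graded spatial template. The following Python sketch is not the project's implementation; it scores how well a locatum position fits a term such as “left of” with respect to a relatum in a viewer-centred (relative) FOR. The 2D coordinate convention, the cosine-falloff template and all function names are illustrative assumptions.

```python
import math

def unit(x, y):
    """Normalize a 2D vector; a zero-length vector has no direction."""
    n = math.hypot(x, y)
    if n == 0:
        raise ValueError("locatum and relatum coincide")
    return (x / n, y / n)

def relative_axes(viewer, relatum):
    """Direction vectors of projective terms in a relative (viewer-centred) FOR.

    Assumes a 2D map frame seen from above (x to the right, y up). 'front'
    points from the relatum back towards the viewer, as in English usage;
    'left' and 'right' are the viewer's left and right.
    """
    vx, vy = unit(relatum[0] - viewer[0], relatum[1] - viewer[1])
    return {
        "behind": (vx, vy),
        "front": (-vx, -vy),
        "left": (-vy, vx),   # viewing direction rotated by +90 degrees
        "right": (vy, -vx),  # viewing direction rotated by -90 degrees
    }

def applicability(term, locatum, relatum, axes):
    """Hypothetical template: cosine between the term's axis and the
    relatum-to-locatum direction, clipped to [0, 1]."""
    dx, dy = unit(locatum[0] - relatum[0], locatum[1] - relatum[1])
    ax, ay = axes[term]
    return max(0.0, ax * dx + ay * dy)

# Viewer at the origin looking at a relatum 2 m ahead (along +y).
axes = relative_axes(viewer=(0.0, 0.0), relatum=(0.0, 2.0))
print(round(applicability("left", (-1.0, 2.0), (0.0, 2.0), axes), 3))    # 1.0
print(round(applicability("left", (-1.0, 3.0), (0.0, 2.0), axes), 3))    # 0.707
print(round(applicability("behind", (-1.0, 3.0), (0.0, 2.0), axes), 3))  # 0.707
```

A locatum diagonally behind and to the left of the relatum fits “left of” and “behind” equally well in this sketch, which is one kind of uncertainty a robot has to resolve from further context.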

In an empirical study, we addressed two questions: (a) does the presence or absence of a background in the scene influence the selection of a FOR, and (b) what is the effect of a previously selected FOR on the subsequent processing of a different FOR? Our results show that a realistic background in the scene makes the selection of the relative FOR more likely. We explain this phenomenon by assuming that the presence of a background stimulates an embodied mental simulation of a real scene. Regarding the second question, compared to a control condition, we found higher accuracy when the same FOR was used again and lower accuracy when a different FOR was required, whereas for response latencies we only found a delay effect with a different FOR. Given our assumptions, this suggests a priming effect and indicates that FOR selection is accompanied not only by inhibition of the non-selected FOR but also by a higher level of activation of the selected FOR. More generally, our results show that priming not only has facilitatory effects on referential communication but can also slow us down or reduce our communicative efficiency, depending on the sequential context in which utterances occur.

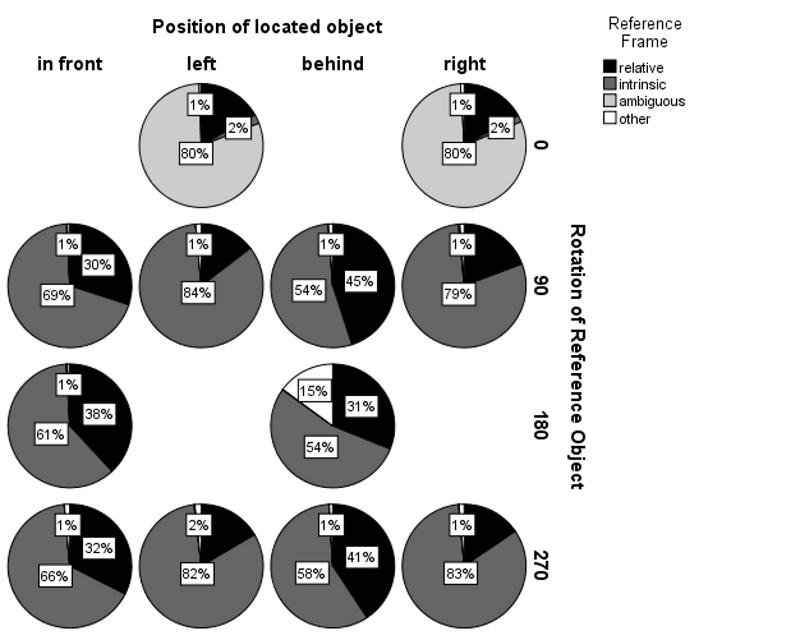

It has been shown that, in human-robot interaction, an addressee-centred frame of reference is mostly preferred, but also that there is frequently an inherent ambiguity between the relative and the intrinsic (object-centred) FOR, as demonstrated in both simulated and real environments. Expecting regularities in FOR selection, we used objects from a domestic domain to determine how frequently the relative and the intrinsic FOR are used with different pieces of furniture. By varying the rotation of the relatum (reference object) and the position of the locatum (located object), we simulated different perspectives on object configurations. Spatial verbal descriptions were elicited in an online experiment and categorized according to FOR use. We found significant effects of both the rotation of the relatum and the position of the locatum on FOR selection.
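
The ambiguity between the relative and the intrinsic FOR, and its dependence on the rotation of the relatum, can be sketched by checking under which FOR a projective term fits a given locatum position. The Python sketch below is an illustration only, not the method used in the study; the function names, the heading convention (radians, counter-clockwise from the x axis) and the acceptance threshold of 0.7 are hypothetical.

```python
import math

def unit(x, y):
    n = math.hypot(x, y)
    if n == 0:
        raise ValueError("zero-length vector")
    return (x / n, y / n)

def intrinsic_axes(heading):
    """Axes given by the relatum's own front, which faces direction `heading`."""
    fx, fy = math.cos(heading), math.sin(heading)
    return {"front": (fx, fy), "behind": (-fx, -fy),
            "left": (-fy, fx), "right": (fy, -fx)}

def relative_axes(viewer, relatum):
    """Axes given by the viewer's perspective; 'front' points towards the viewer."""
    vx, vy = unit(relatum[0] - viewer[0], relatum[1] - viewer[1])
    return {"front": (-vx, -vy), "behind": (vx, vy),
            "left": (-vy, vx), "right": (vy, -vx)}

def consistent_fors(term, locatum, relatum, heading, viewer, threshold=0.7):
    """Return the FORs under which `term` describes the locatum position.

    `threshold` is a hypothetical minimum cosine between the term's axis and
    the relatum-to-locatum direction (0.7 is roughly within 45 degrees).
    """
    dx, dy = unit(locatum[0] - relatum[0], locatum[1] - relatum[1])
    fors = []
    for name, axes in (("intrinsic", intrinsic_axes(heading)),
                       ("relative", relative_axes(viewer, relatum))):
        ax, ay = axes[term]
        if ax * dx + ay * dy >= threshold:
            fors.append(name)
    return fors

viewer, chair = (0.0, 0.0), (0.0, 2.0)
facing_viewer, facing_away = -math.pi / 2, math.pi / 2

# Chair facing the viewer: both FORs agree on "front" ...
print(consistent_fors("front", (0.0, 1.0), chair, facing_viewer, viewer))  # ['intrinsic', 'relative']
# ... but the chair's "left" is the viewer's right.
print(consistent_fors("left", (1.0, 2.0), chair, facing_viewer, viewer))   # ['intrinsic']
# Chair facing away: the two readings of "front" point in opposite directions.
print(consistent_fors("front", (0.0, 1.0), chair, facing_away, viewer))    # ['relative']
```

Which readings coincide and which conflict thus depends on the rotation of the relatum, consistent with the finding that relatum rotation affects FOR selection.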

A conversational service robot that is expected to understand and produce spatial verbal descriptions needs to deal with both kinds of FOR. To enable our robot to handle general cases, we have gone beyond the state of the art in semantic mapping in the following directions. First, we continued work from the first funding phase towards an improved holistic approach to recognizing scene types based on 3D features, which will be further exploited in the next funding phase to propagate top-down expectations about furniture and object categories. Second, we have implemented an initial approach to furniture recognition that provides possible anchor points for intrinsic FORs. Third, based on such furniture hypotheses, we proposed an object-centred graph representation that accumulates information provided by spatial verbal descriptions in order to disambiguate referential expressions (including FOR selection) as well as uncertain scene information, such as furniture categories and furniture orientations.
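
How an object-centred graph might accumulate spatial descriptions to reduce uncertainty about furniture orientation is sketched below in Python. This is an illustrative assumption rather than the project's representation: each furniture hypothesis keeps a belief over four discrete facing directions, and every description is stored as an edge to its relatum and, assuming it was given in the intrinsic FOR, updates that belief with a Bayes-style rule. The class and function names, the discretization and the noise term `eps` are hypothetical; a fuller model would also weigh the relative reading and furniture categories.

```python
import math
from dataclasses import dataclass, field

# Hypothetical discretization of furniture orientation (radians, CCW from x axis).
ORIENTATIONS = [0.0, math.pi / 2, math.pi, 3 * math.pi / 2]

@dataclass
class FurnitureNode:
    name: str
    position: tuple
    # Start with a uniform belief over the discrete orientations.
    belief: list = field(default_factory=lambda: [1.0 / len(ORIENTATIONS)] * len(ORIENTATIONS))

def intrinsic_fit(term, heading, direction):
    """Cosine fit of a projective term in the intrinsic FOR of an object facing `heading`."""
    fx, fy = math.cos(heading), math.sin(heading)
    axes = {"front": (fx, fy), "behind": (-fx, -fy),
            "left": (-fy, fx), "right": (fy, -fx)}
    ax, ay = axes[term]
    return max(0.0, ax * direction[0] + ay * direction[1])

class ObjectCentredGraph:
    def __init__(self):
        self.nodes = {}   # furniture hypotheses, keyed by name
        self.edges = []   # (locatum position, term, relatum name) from descriptions

    def add(self, node):
        self.nodes[node.name] = node

    def observe(self, locatum, term, relatum, eps=0.05):
        """Store one description and update the relatum's orientation belief.

        Assumes the intrinsic FOR; `eps` keeps every orientation possible in
        case the description was imprecise or meant relatively.
        """
        node = self.nodes[relatum]
        dx, dy = locatum[0] - node.position[0], locatum[1] - node.position[1]
        n = math.hypot(dx, dy)
        direction = (dx / n, dy / n)
        self.edges.append((locatum, term, relatum))
        posterior = [p * (intrinsic_fit(term, h, direction) + eps)
                     for p, h in zip(node.belief, ORIENTATIONS)]
        total = sum(posterior)
        node.belief = [p / total for p in posterior]
        return node.belief

graph = ObjectCentredGraph()
graph.add(FurnitureNode("sofa", (0.0, 0.0)))
# "The lamp is in front of the sofa": lamp observed at (0, -1).
print([round(p, 3) for p in graph.observe((0.0, -1.0), "front", "sofa")])
# [0.042, 0.042, 0.042, 0.875]
# "The plant is to the left of the sofa": plant observed at (1, 0).
print(round(graph.observe((1.0, 0.0), "left", "sofa")[3], 3))  # 0.993
```

Each additional description sharpens the belief about the same furniture node, which mirrors the idea of accumulating information across utterances rather than interpreting each one in isolation.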

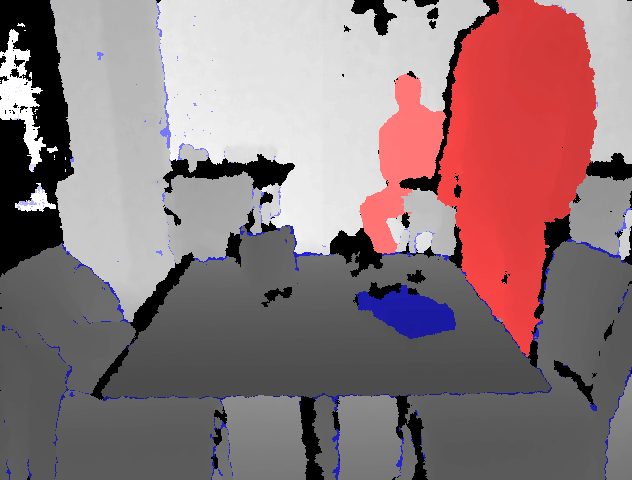

The underlying semantic mapping approach also deals with movable unknown objects by means of an articulated scene model, which registers scene changes caused by a human operator (e.g. moving a cup from the kitchen table to the dinner table). As in related work, scene change detection is based on an egocentric representation. To exploit these observations in other situations and from other viewpoints, we systematically combine them with an allocentric metric representation of the environment, so that the movable parts of a scene and a dynamic 3D background model can be constructed on the fly from different positions and viewpoints.
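
The combination of egocentric change detection with an allocentric map can be sketched as follows. The Python code transforms points seen in the robot frame into map coordinates using the robot pose, quantizes them into grid cells, and compares them with a background model only inside the currently visible region, so that cells that disappeared there and cells that newly appeared can be attributed to a moved object. It is a simplified 2D illustration with assumed names, a hypothetical 0.1 m cell size and a visible region that is simply given; it is not the project's articulated scene model.

```python
import math

def to_allocentric(points, pose):
    """Transform 2D points from the robot (egocentric) frame into the map frame.

    `pose` = (x, y, theta): robot position and heading in the map frame.
    In the robot frame, x points forward and y to the left.
    """
    x0, y0, theta = pose
    c, s = math.cos(theta), math.sin(theta)
    return [(x0 + c * px - s * py, y0 + s * px + c * py) for px, py in points]

def to_cells(points, resolution=0.1):
    """Quantize map-frame points into grid cells of `resolution` metres (hypothetical)."""
    return {(math.floor(x / resolution), math.floor(y / resolution)) for x, y in points}

def detect_changes(background, observed, visible):
    """Compare an observation with the background model inside the visible region.

    Returns (appeared, disappeared): occupied cells that are new, and background
    cells that should be visible now but were not observed.
    """
    appeared = observed - background
    disappeared = (background & visible) - observed
    return appeared, disappeared

background = {(20, 10)}             # cup previously mapped on the kitchen table
pose = (2.0, 0.0, math.pi / 2)     # robot at (2, 0) in the map, facing +y
scan = [(1.05, -1.05)]              # cup now seen 1.05 m ahead and 1.05 m to the right
observed = to_cells(to_allocentric(scan, pose))
visible = {(20, 10), (30, 10)}      # cells in the current field of view (assumed given)
appeared, disappeared = detect_changes(background, observed, visible)
print(appeared, disappeared)        # {(30, 10)} {(20, 10)}
```

Restricting `disappeared` to visible cells matters in this sketch: a background cell outside the current view is not evidence that an object has moved.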

First Period: July 2006 - June 2010

Language processing involves forming a representation of the matters talked about, in addition to a representation of the language itself. For a dialogue to be successful, it is essential that both interlocutors develop partially aligned models of the situation under discussion. We assume that this also holds for communication about complex, visually perceived environments and propose that such environments are mentally represented by selective situation models based on given spatial descriptions and 3D perception.

The main goal of the project is to investigate how a shared, perceptually grounded mental model of the situation under discussion is built through conversation, and to exploit the principles of situation-model alignment in human-human communication for human-robot communication. This will enable a mobile robot to understand descriptive language more effectively, to deal with partially unknown situations, to ignore irrelevant information and to act in cluttered environments. We intend to examine factors that lead to an alignment of such representations in situation models. We focus on relational and perceptual components of such situation models that are grounded in a common visually perceived environment. Several aspects of the question of how shared situation models arise will be addressed in the project by combining psycholinguistic experiments with theoretical and conceptual work in cognitive science and with computational modelling. These include the interplay between automatic alignment processes and resource-limited elaborate construction, the essentially resource-free use of these integrated situation models once they are formed, and the role of pan-situational world knowledge (schemata, categorisation levels, typicality) invoked by language use.